
Support Vector Machine Software

Early Support Vector Machine Solvers

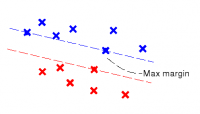

Support Vector Machines (SVMs) were discovered in three steps:

In the sixties, Vapnik and Lerner propose the Optimal Hyperplane classifier.

In 1991, Boser, Guyon and Vapnik add the Kernel Trick.

In 1994, Cortes and Vapnik finalize the modern Soft Margin formulation of SVMs.

The second step happened while I was working at Bell Labs. We were all very excited. Bernhard Boser's first implementation of SVM was derived from ideas suggested in Vapnik's 1982 book. That approach involved considering all pairs of examples belonging to different classes. I wrote the second SVM implementation, named GP2 for Generalized Portrait version 2. This was the first implementation using the standard dual formulation of SVMs with an equality constraint. The GP2 optimizer used a modified gradient projection method with chunking and conjugation. This code was later used as a starting point by Corinna Cortes.
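For reference, the standard dual formulation can be written as below, using our own notation: training examples $x_i$ with labels $y_i \in \{-1,+1\}$, a kernel $K$, and multipliers $\alpha_i$. The equality constraint is $\sum_i y_i \alpha_i = 0$. It comes from the unregularized bias term $b$ of the decision function $f(x) = \sum_i \alpha_i y_i K(x_i, x) + b$. The box bound $\alpha_i \le C$ belongs to the later soft-margin formulation. The earlier hard-margin problem keeps only $\alpha_i \ge 0$.

$$
\max_{\alpha}\; \sum_{i=1}^{n} \alpha_i - \frac{1}{2}\sum_{i=1}^{n}\sum_{j=1}^{n} \alpha_i \alpha_j\, y_i y_j\, K(x_i, x_j)
\quad \text{subject to} \quad \sum_{i=1}^{n} y_i \alpha_i = 0, \qquad 0 \le \alpha_i \le C .
$$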

The Royal Holloway Support Vector Machine

Around 1997, we decided to contribute a quadratic programming optimizer for the Royal Holloway Support Vector Machine code. The GP2 code was running inside an early version of Lush. I quickly rewrote its core in C++ and renamed it SVQP. Jason Weston and Mark Stitson then implemented sophisticated chunking strategies around the “Bottou” optimizer. SVQP is still available in the Lush CVS repository. It is no longer a state-of-the-art SVM solver, but it can be handy for solving non-quadratic convex problems in moderately large dimensions.

The SVQP2 solver

Support Vector Machine solvers are now much faster than these early codes. John Platt's SMO (Sequential Minimal Optimization) method was a big improvement. Around 2003, I decided to implement SVQP2, a state-of-the-art SVM solver using the latest known techniques for caching kernel values and choosing example pairs. Although SVQP2 is the main SVM solver implemented in Lush, it does not rely on Lush facilities and can be used as a standalone library. It is available in the Lush CVS repository: